Calibration process

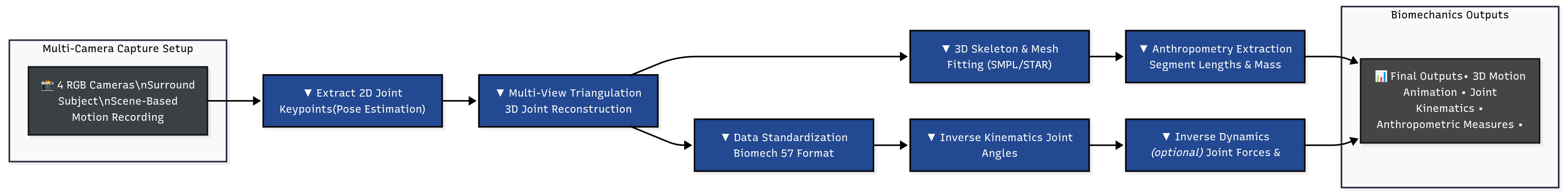

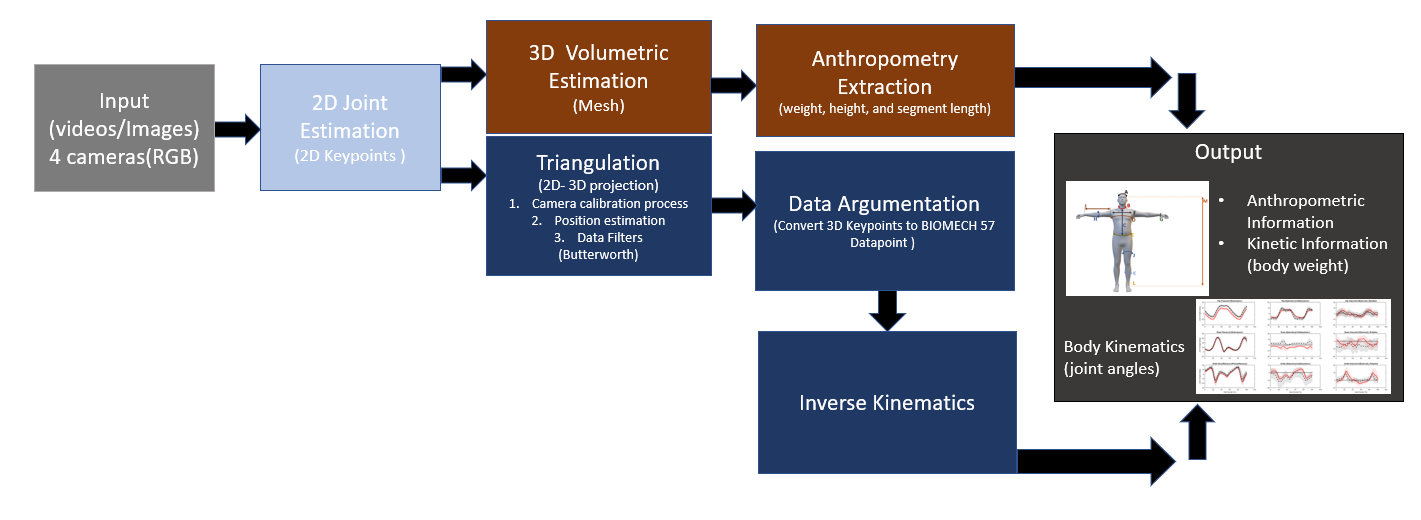

PoseMech is structured as a multi-stage computer vision and biomechanical inference pipeline. The system begins with a 2D convolutional neural network (CNN) pose estimator that extracts body keypoints from monocular RGB video. These 2D landmarks are temporally stabilized and passed to a 3D reconstruction module, which infers depth-aware kinematic structure from geometric constraints and learned pose priors to produce a coherent 3D skeletal representation.

The reconstructed pose is then mapped into a biomechanical computation layer consisting of 57 anatomically structured landmarks. These landmarks define segment coordinate systems for the trunk, pelvis, and upper and lower extremities, enabling calculation of joint angles, segment orientations, and task-specific kinematic metrics. Using this articulated landmark model, PoseMech estimates joint flexion/extension angles and derives workload-relevant biomechanical indicators through kinematic transformation and inverse-dynamics–inspired approximations.

By integrating 2D CNN perception, 3D pose reconstruction, and the structured 57-landmark biomechanical model, PoseMech converts raw video into quantitative ergonomic assessments. This layered architecture enables contactless estimation of biomechanical workload while maintaining anatomical interpretability and computational scalability for real-world deployment.
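In its simplest form, a joint flexion/extension angle can be computed from three 3D landmarks: the joint itself and one landmark on each adjacent segment. The sketch below illustrates that idea only. PoseMech's actual segment coordinate systems and angle conventions are not specified here, so the function names (`joint_angle_deg`, `flexion_deg`), the landmark coordinates, and the convention that a fully extended joint reads as 0° of flexion are all assumptions made for illustration.

```python
import math
from typing import Sequence

Vec3 = Sequence[float]  # (x, y, z) landmark position; units cancel out in the angle


def _sub(a: Vec3, b: Vec3) -> tuple:
    """Component-wise difference a - b."""
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def joint_angle_deg(proximal: Vec3, joint: Vec3, distal: Vec3) -> float:
    """Included angle at `joint` between the two adjacent segments, in degrees.

    Hypothetical helper: the angle between the vectors joint->proximal and
    joint->distal, obtained from the normalized dot product.
    """
    u = _sub(proximal, joint)
    v = _sub(distal, joint)
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("degenerate segment: two landmarks coincide")
    # Clamp to [-1, 1] so floating-point error cannot push acos out of its domain.
    cos_theta = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cos_theta))


def flexion_deg(proximal: Vec3, joint: Vec3, distal: Vec3) -> float:
    """Flexion under the assumed convention: 0 degrees when the joint is straight."""
    return 180.0 - joint_angle_deg(proximal, joint, distal)


# Illustrative (made-up) hip, knee, and ankle positions in metres.
straight_leg = flexion_deg((0.0, 1.0, 0.0), (0.0, 0.5, 0.0), (0.0, 0.0, 0.0))
bent_knee = flexion_deg((0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
```

A three-point angle like this is unsigned and ignores the plane of motion. Separating flexion/extension from abduction or rotation requires full segment coordinate frames, which is the role the landmark-defined segment systems play in the description above.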

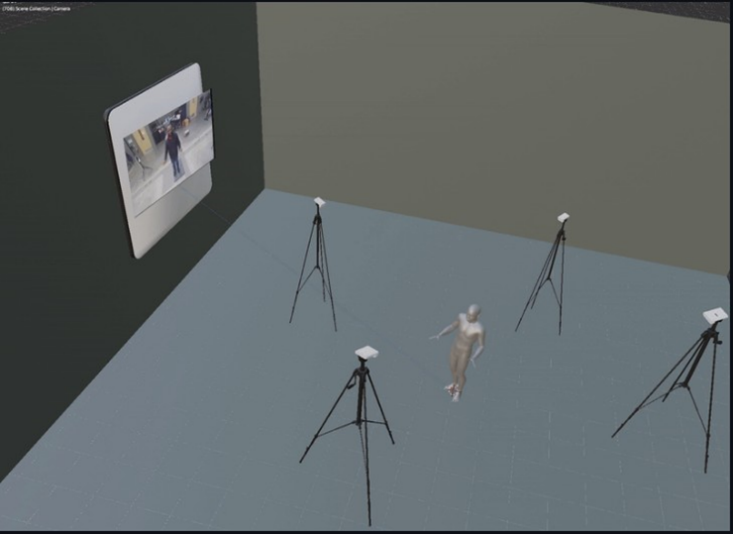

Four-panel view of the PoseMech workflow: synchronized 2D capture, mesh overlay, real-time biomechanical signals, and an open scene visualization.

Pipeline: Capture → 2D Keypoints → 3D Reconstruction / Mesh → Biomech Estimation → Visualization & Reports.
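The stage ordering above can be sketched as a chain of functions, each consuming the previous stage's output. This is an illustrative skeleton only: every stage body is a placeholder, and the function names, the dictionary keys, and the `run_pipeline` helper are hypothetical rather than PoseMech's actual interfaces.

```python
from typing import Any, Callable, Dict, List, Tuple

State = Dict[str, Any]
Stage = Tuple[str, Callable[[State], State]]


def capture(state: State) -> State:
    # Placeholder: a real system would read a frame from a camera or video file.
    return {**state, "image": state["frame"]}


def keypoints_2d(state: State) -> State:
    # Placeholder for the 2D CNN pose estimator: returns one fixed keypoint.
    return {**state, "keypoints_2d": [(0.5, 0.25)]}


def reconstruct_3d(state: State) -> State:
    # Placeholder for 3D reconstruction: lifts each 2D point by appending z = 0.
    lifted = [(x, y, 0.0) for x, y in state["keypoints_2d"]]
    return {**state, "keypoints_3d": lifted}


def biomech_estimation(state: State) -> State:
    # Placeholder for joint-angle and workload computation.
    return {**state, "metrics": {"n_landmarks": len(state["keypoints_3d"])}}


def visualize(state: State) -> State:
    # Placeholder for plots and reports: builds a one-line text summary.
    summary = "landmarks={}".format(state["metrics"]["n_landmarks"])
    return {**state, "report": summary}


def run_pipeline(frame: Any, stages: List[Stage]) -> State:
    """Run the stages in order and record which ones executed."""
    state: State = {"frame": frame, "trace": []}
    for name, stage_fn in stages:
        state = stage_fn(state)
        state["trace"].append(name)
    return state


STAGES: List[Stage] = [
    ("capture", capture),
    ("keypoints_2d", keypoints_2d),
    ("reconstruct_3d", reconstruct_3d),
    ("biomech_estimation", biomech_estimation),
    ("visualize", visualize),
]

result = run_pipeline(frame="frame-0", stages=STAGES)
```

Keeping each stage behind the same state-in, state-out signature means an individual stage, such as the 2D estimator, could be swapped or tested in isolation without touching the rest of the chain.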